14. Vectorizing backpropagation (in theory)

Now that we understand how to work with the Tensor class in practice, it’s high time that we pop the hood and remove all the screws.

I have some good news and some bad news for you. The good news is that regarding the computational graph structure, Tensor works the same as Scalar. Our vectorized computational graphs are made up of Tensor nodes and Edge connections.

The bad news is that the derivatives are far more complex than their scalar counterparts. We need to significantly up our linear algebra game to keep pace.

Let’s get started.

14.1. Vector-scalar graphs

As usual, let’s see the simplest possible example: the dot product. For any \( \mathbf{x}, \mathbf{y} \in \mathbb{R}^n \), their dot product is defined by

\[ z = \mathbf{x} \cdot \mathbf{y} = \sum_{i=1}^{n} x_i y_i. \]

In other words, we input two vectors (of the same dimension) and receive a scalar value. Here’s the graph:

Nothing special so far. Now, let’s calculate the derivative with backwards-mode differentiation! Following what we’ve learned so far, this is the graph we need to fill with exact values:

But what on earth do the symbols \( \frac{dz}{d\mathbf{x}} \) and \( \frac{\partial z}{\partial \mathbf{x}} \) mean? Can we take the derivative with respect to a vector?

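Not so surprisingly, these represent

\[ \frac{dz}{d\mathbf{x}} = \left( \frac{dz}{dx_1}, \dots, \frac{dz}{dx_n} \right) \]

and

\[ \frac{\partial z}{\partial \mathbf{x}} = \left( \frac{\partial z}{\partial x_1}, \dots, \frac{\partial z}{\partial x_n} \right), \]

that is, we compressed a vector of derivatives into a single symbol. (In this example, the global derivative \( \frac{dz}{d\mathbf{x}} \) and the local derivative \( \frac{\partial z}{\partial \mathbf{x}} \) coincide. This won’t always be the case.)

For the dot product, the vectorized derivatives are

\[ \frac{\partial z}{\partial \mathbf{x}} = (y_1, \dots, y_n) = \mathbf{y}, \qquad \frac{\partial z}{\partial \mathbf{y}} = (x_1, \dots, x_n) = \mathbf{x}. \]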
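The claim that the derivative of the dot product with respect to \( \mathbf{x} \) is \( \mathbf{y} \) (and vice versa) can be checked numerically. The following is a minimal sketch using NumPy and central differences; the helper names `dot` and `numerical_gradient` are made up for this illustration and are not part of the Tensor class.

```python
import numpy as np


def dot(x, y):
    """Dot product z = sum_i x_i * y_i."""
    return float(np.sum(x * y))


def numerical_gradient(f, x, h=1e-6):
    """Central-difference estimate of (df/dx_1, ..., df/dx_n)."""
    grad = np.zeros_like(x, dtype=float)
    for i in range(x.size):
        step = np.zeros_like(x, dtype=float)
        step[i] = h
        grad[i] = (f(x + step) - f(x - step)) / (2 * h)
    return grad


x = np.array([1.0, 2.0, 3.0])
y = np.array([-1.0, 0.5, 4.0])

# Differentiate with respect to one argument while the other is held fixed.
grad_x = numerical_gradient(lambda v: dot(v, y), x)
grad_y = numerical_gradient(lambda v: dot(x, v), y)

print(np.allclose(grad_x, y))  # True
print(np.allclose(grad_y, x))  # True
```

Because the dot product is linear in each argument, the finite-difference estimate matches the exact derivative up to rounding error.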

Sounds simple enough. What about vector-vector functions?

14.2. Vector-vector graphs

Let’s dial the difficulty up just a notch and consider vector addition: for any \( \mathbf{x}, \mathbf{y} \in \mathbb{R}^n \), the expression

\[ \mathbf{z} = \mathbf{x} + \mathbf{y} \]

defines the same V-shaped computational graph as the dot product:

The derivative graph also looks the same, with one major difference: there are menacing vector-vector derivatives.

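Fear not. It’s a similar notational trick, encoding a matrix of derivatives:

\[ \frac{\partial \mathbf{z}}{\partial \mathbf{x}} = \begin{bmatrix} \frac{\partial z_1}{\partial x_1} & \cdots & \frac{\partial z_1}{\partial x_n} \\ \vdots & \ddots & \vdots \\ \frac{\partial z_n}{\partial x_1} & \cdots & \frac{\partial z_n}{\partial x_n} \end{bmatrix}. \]

The matrix \( \frac{\partial \mathbf{z}}{\partial \mathbf{x}} \) is called the Jacobian of \( \mathbf{z} \) with respect to \( \mathbf{x} \). It’s an essential concept of differential calculus, and we’ll frequently encounter it throughout our journey.

We can use a similar notation for the global derivatives as well:

\[ \frac{d\mathbf{z}}{d\mathbf{x}} = \begin{bmatrix} \frac{dz_1}{dx_1} & \cdots & \frac{dz_1}{dx_n} \\ \vdots & \ddots & \vdots \\ \frac{dz_n}{dx_1} & \cdots & \frac{dz_n}{dx_n} \end{bmatrix}. \]

Following our notational conventions, let’s call \( \frac{\partial \mathbf{z}}{\partial \mathbf{x}} \) the local Jacobian and \( \frac{d\mathbf{z}}{d\mathbf{x}} \) the global Jacobian.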

Let’s make the definitions precise.

Definition 14.1 (The Jacobian)

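Let \( \mathbf{f}: \mathbb{R}^n \to \mathbb{R}^m \) be an \( n \)-variable function mapping \( \mathbf{x} \in \mathbb{R}^n \) to \( \mathbf{y} = \mathbf{f}(\mathbf{x}) \in \mathbb{R}^m \).

The Jacobian of \( \mathbf{f} \) is defined by the matrix

\[ \frac{\partial \mathbf{f}}{\partial \mathbf{x}} = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \cdots & \frac{\partial f_1}{\partial x_n} \\ \vdots & \ddots & \vdots \\ \frac{\partial f_m}{\partial x_1} & \cdots & \frac{\partial f_m}{\partial x_n} \end{bmatrix} \in \mathbb{R}^{m \times n}. \]

Note that when the input and output dimensions don’t match, the Jacobian is not a square matrix. It has as many rows as the output dimension and as many columns as the input dimension, that is, its shape is \( m \times n \).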
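To make the shape convention concrete, here is a small sketch that estimates a Jacobian column by column with central differences. It uses NumPy; `numerical_jacobian` and the example function `f` are hypothetical helpers written for this illustration only.

```python
import numpy as np


def numerical_jacobian(f, x, h=1e-6):
    """Central-difference estimate of the Jacobian, shape (m, n)."""
    x = np.asarray(x, dtype=float)
    m = np.asarray(f(x)).size
    jac = np.zeros((m, x.size))
    for j in range(x.size):
        step = np.zeros_like(x)
        step[j] = h
        # Column j holds the derivatives of all outputs with respect to x_j.
        jac[:, j] = (f(x + step) - f(x - step)) / (2 * h)
    return jac


x = np.array([1.0, 2.0, 3.0])
y = np.array([10.0, 20.0, 30.0])

# Vector addition z = x + y with y held fixed: the local Jacobian is the identity.
jac_add = numerical_jacobian(lambda v: v + y, x)


def f(v):
    """An example map from R^3 to R^2, so its Jacobian is 2 x 3."""
    return np.array([v[0] * v[1], np.sin(v[2])])


jac_f = numerical_jacobian(f, x)

print(jac_add.shape, jac_f.shape)  # (3, 3) (2, 3)
```

The rows are indexed by the outputs and the columns by the inputs, which is why the second Jacobian is not square.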

14.3. The vectorized chain rule

Now that we understand the building blocks of vectorized computational graphs, let’s focus on the lead actor: the chain rule. Like always, it’s best to study it through an example. You already know the drill by now, so let’s start with the graph right away.

Let’s compute the derivative! Right away, we hit a snag. Unlike our previous examples, there’s not one, but two terminal nodes! Thus, we have two derivative graphs to fill up with values.

According to what we’ve learned when we introduced backpropagation, the first graph gives

while the second graph gives

So many symbols and operations; it’s not the most pleasant sight to look at. Do you recognize a pattern? Let me rewrite the above expressions in matrix form:

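In other words,

\[ \frac{d\mathbf{z}}{d\mathbf{x}} = \frac{d\mathbf{z}}{d\mathbf{y}} \frac{\partial \mathbf{y}}{\partial \mathbf{x}} \]

holds! With one brilliant stroke of linear algebra, we’ve contracted our computational graph from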

to

where \( \mathbf{x}, \mathbf{y}, \mathbf{z} \in \mathbb{R}^2 \), and its derivative graphs from

to

where \( \frac{d\mathbf{z}}{d\mathbf{x}}, \frac{d\mathbf{z}}{d\mathbf{y}}, \frac{d\mathbf{z}}{d\mathbf{z}}, \frac{\partial \mathbf{y}}{\partial \mathbf{x}}, \frac{\partial \mathbf{z}}{\partial \mathbf{y}} \in \mathbb{R}^{2 \times 2} \) are \( 2 \times 2 \) matrices. That’s a massive improvement! I told you that linear algebra is powerful.
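The contraction can be illustrated numerically. The sketch below assumes two made-up layers \( \mathbb{R}^2 \to \mathbb{R}^2 \) (they are not the functions behind the figures) with hand-derived local Jacobians, and compares the single matrix product with the entry-by-entry scalar sums over the intermediate nodes.

```python
import numpy as np


def y_of_x(x):
    """Hypothetical first layer, R^2 -> R^2."""
    return np.array([x[0] * x[1], x[0] + x[1]])


def z_of_y(y):
    """Hypothetical second layer, R^2 -> R^2."""
    return np.array([np.sin(y[0]), y[0] * y[1]])


def jac_y_of_x(x):
    """Local Jacobian of y with respect to x, derived by hand."""
    return np.array([[x[1], x[0]],
                     [1.0, 1.0]])


def jac_z_of_y(y):
    """Local Jacobian of z with respect to y, derived by hand."""
    return np.array([[np.cos(y[0]), 0.0],
                     [y[1], y[0]]])


x = np.array([0.5, -1.5])
y = y_of_x(x)

# Vectorized chain rule: one matrix product.
global_jac = jac_z_of_y(y) @ jac_y_of_x(x)

# The same four entries, written as scalar sums over the intermediate nodes.
scalar_jac = np.zeros((2, 2))
for i in range(2):
    for j in range(2):
        scalar_jac[i, j] = sum(
            jac_z_of_y(y)[i, k] * jac_y_of_x(x)[k, j] for k in range(2)
        )

print(np.allclose(global_jac, scalar_jac))  # True
```

Each entry of the product is exactly the sum over paths that the scalar chain rule prescribes, so the matrix form is a repackaging and not a new rule.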

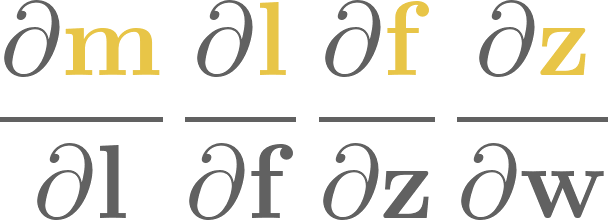

Going one step further, consider the vectorized computational graph defined by \( \mathbf{x} \in \mathbb{R}^n, \mathbf{y} \in \mathbb{R}^m, \mathbf{z} \in \mathbb{R}^k, \) and \( \mathbf{w} \in \mathbb{R}^l \):

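(For simplicity, I have put the graph and its derivative graph onto the same figure.) In this case, the Jacobians have various dimensions:

\[ \frac{\partial \mathbf{y}}{\partial \mathbf{x}} \in \mathbb{R}^{m \times n}, \quad \frac{\partial \mathbf{z}}{\partial \mathbf{y}} \in \mathbb{R}^{k \times m}, \quad \frac{\partial \mathbf{w}}{\partial \mathbf{z}} \in \mathbb{R}^{l \times k} \]

and

\[ \frac{d\mathbf{w}}{d\mathbf{z}} \in \mathbb{R}^{l \times k}, \quad \frac{d\mathbf{w}}{d\mathbf{y}} \in \mathbb{R}^{l \times m}, \quad \frac{d\mathbf{w}}{d\mathbf{x}} \in \mathbb{R}^{l \times n}. \]

According to the chain rule,

\[ \frac{d\mathbf{w}}{d\mathbf{x}} = \frac{d\mathbf{w}}{d\mathbf{y}} \frac{\partial \mathbf{y}}{\partial \mathbf{x}} = \frac{\partial \mathbf{w}}{\partial \mathbf{z}} \frac{\partial \mathbf{z}}{\partial \mathbf{y}} \frac{\partial \mathbf{y}}{\partial \mathbf{x}}. \]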
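A quick way to see how the dimensions fit together is to track the shapes during a backward pass. The sketch below assumes a single chain \( \mathbf{x} \to \mathbf{y} \to \mathbf{z} \to \mathbf{w} \) made of hypothetical linear layers, for which each local Jacobian is simply the layer’s matrix; the concrete sizes and the names `A`, `B`, `C` are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m, k, l = 4, 3, 5, 2

# Hypothetical linear layers: y = A x, z = B y, w = C z.
# For a linear map, the local Jacobian is the matrix itself.
A = rng.normal(size=(m, n))  # local Jacobian of y w.r.t. x, shape (m, n)
B = rng.normal(size=(k, m))  # local Jacobian of z w.r.t. y, shape (k, m)
C = rng.normal(size=(l, k))  # local Jacobian of w w.r.t. z, shape (l, k)

# Backward pass: start at the terminal node and move toward the input.
dw_dw = np.eye(l)      # shape (l, l)
dw_dz = dw_dw @ C      # shape (l, k)
dw_dy = dw_dz @ B      # shape (l, m)
dw_dx = dw_dy @ A      # shape (l, n)

print(dw_dz.shape, dw_dy.shape, dw_dx.shape)  # (2, 5) (2, 3) (2, 4)
```

Every global Jacobian has \( l \) rows, the dimension of the terminal node, while the number of columns follows the node we differentiate with respect to.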

One more step. Now that we’ve dealt with vectorized computational graphs that consist of one path, it’s time to add some twists and forks. Take a look at the next graph, one that we’ve seen before:

Here, the chain rule says that
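As an illustration of how forks are handled, consider a hypothetical graph (an assumption made for this sketch, not necessarily the one in the figure) where \( \mathbf{x} \) feeds two branches \( \mathbf{y} = A\mathbf{x} \) and \( \mathbf{z} = B\mathbf{x} \), which merge into \( \mathbf{w} = C\mathbf{y} + D\mathbf{z} \). Each path from \( \mathbf{x} \) to \( \mathbf{w} \) contributes a product of Jacobians, and the contributions are added.

```python
import numpy as np

rng = np.random.default_rng(1)
n, m, k, l = 3, 4, 2, 5

# Hypothetical fork: y = A x and z = B x both read x, then w = C y + D z.
A = rng.normal(size=(m, n))  # local Jacobian of y w.r.t. x
B = rng.normal(size=(k, n))  # local Jacobian of z w.r.t. x
C = rng.normal(size=(l, m))  # local Jacobian of w w.r.t. y
D = rng.normal(size=(l, k))  # local Jacobian of w w.r.t. z


def w_of_x(x):
    """Forward pass through both branches of the fork."""
    return C @ (A @ x) + D @ (B @ x)


# One matrix product per path, summed over the two paths.
dw_dx = C @ A + D @ B

# w is linear in x here, so w(x) must equal dw_dx @ x.
x = rng.normal(size=n)
print(np.allclose(w_of_x(x), dw_dx @ x))  # True
```

The linear layers keep the check exact; for nonlinear nodes the same sum-over-paths structure holds with the Jacobians evaluated at the current values.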

In theory, vectorized backpropagation works the same as its vanilla counterpart, with matrices instead of scalars. Are we ready to roll with the implementation?

See you in the next chapter!